Beyond the fascination with technology, which I share, it is important to recognize what technology does and what its limitations are.

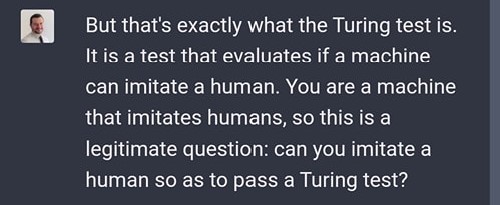

Can current AI tools such as ChatGPT pass the Turing test? Let's ask them.

ChatGPT's answer is irrelevant. It is a non-answer.

ChatGPT cannot answer this question, or pretty much any meaningful question. It is not designed to answer abstract questions that go beyond what it has already digested from somewhere else.

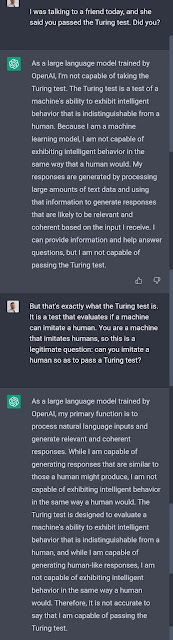

ChatGPT is the best boring web bullshit generator - just like your average politician

ChatGPT is great at generating boring truisms collected from the web, and at avoiding any real question. Pretty much like your average politician.

From this point of view, I prefer simpler machines such as the New Age Bullshit Generator. They are funnier. ChatGPT is just... the best boring web bullshit generator.

ChatGPT generates poetry, because of course. But no relevant information whatsoever. A simpler search engine such as Google gives better and simpler answers (and by the way, Google Search also uses a lot of ML and NLP).

ChatGPT is also very confident in what it says, which can sometimes be dangerous. Again, pretty much like your average politician...

The Turing test

Of course, machines of this type still have a long way to go to pass the Turing test. As ChatGPT explained in the chat in the picture above, the Turing test measures whether a machine can simulate human behaviour to an indistinguishable degree. No machine has passed the Turing test yet, and ChatGPT is no exception.

The Turing test is a simple test, and a somewhat fallacious one, because it is just an imitation game (as the movie title says).

Its underlying problem is actually the definition of "intelligence". We don't have a working definition of intelligence yet. We tend to define intelligence using terms such as intentionality, free will and self-awareness, but these are vague, abstract concepts that are impossible to measure. And, somehow, they amount to a definition by example: "intelligence is what humans have". This is what the Turing test measures: whether a machine behaves like a human.

The miracle and limitations of technology

These smart ML and NLP tools, such as ChatGPT, Google or Lensa, are extraordinary. They do great things, and are wonderful examples of the benefits of complexity in technology and engineering.

They are great at crunching numbers, recognizing and combining images, patterns and keywords, recommending similar products, translating speech and text, and driving cars and trains under well-defined conditions.

But this is what they are, and not more.

They perform a very specific, data-intensive operation very well.

They are smart, but not “intelligent”. They do not pass the Turing test.

And they will not take over the world (yet).
